100+ questions. One platform.

Other engineering analytics tools answer four questions. Gitrevio answers all of these, through AI chat, scheduled reports, real-time alerts, the MCP server, the REST API, or the Slack and Teams bot.


Every question below is something you can ask Gitrevio right now: in natural language through our AI chat, as a scheduled report, as an alert trigger, through the MCP server inside Claude or Cursor, via the REST API, or through the Slack and Teams bot. These are not theoretical capabilities. Each one is backed by real data integrations, purpose-built ML models, and AI workers that continuously process your engineering activity. If you can connect the data source, Gitrevio can answer the question.

People

Your team is your most expensive and most valuable asset. Yet most engineering leaders fly blind on people questions — relying on gut feeling for hiring, retention, and performance. Gitrevio gives you data-backed answers about every person on your team, without turning into a surveillance tool.

How long does it take a new hire to ship their first meaningful feature?

What's our average onboarding curve, and which teams onboard faster?

Who are the top mentors on the team based on review quality and junior ramp-up speed?

Is anyone showing signs of burnout — declining velocity, late-night commits, shrinking review engagement?

Who's at risk of leaving, and what's the organizational blast radius if they do?

Are there signs of dual employment — anomalous working patterns across multiple organizations?

Which engineers are silently carrying the team through code review volume?

How balanced is the workload across the team right now?

Who are the knowledge bottlenecks — people who are the sole reviewer for critical services?

What does each contributor's activity profile look like over the past 90 days?

Are new hires getting enough review attention in their first 30 days?

Which team members are context-switching the most between projects?

How does each engineer's velocity compare to their own 6-month trend, rather than to other people's?

Who has the broadest code ownership — and who is dangerously siloed?

Are senior engineers spending too much time on maintenance versus building new features?

How effective are our 1:1 mentorship pairings based on mentee growth curves?

Which engineers have grown the most in scope and impact over the past quarter?

Are any team members consistently working outside normal hours?

What's the collaboration pattern between senior and junior engineers?

How quickly do new hires start reviewing others' code?

What contributor typology does our new hire match? How do similar profiles ramp up?

Which process changes would help a team with this profile most?

Process

Process problems are invisible until they compound. A review bottleneck here, a planning miss there — individually minor, collectively devastating. Gitrevio tracks every aspect of how your team works, from sprint planning accuracy to cross-team dependency patterns, so you can fix processes before they break delivery.

How far off are our sprint estimates from actual delivery — and is it getting better or worse?

Which PRs sit in review for more than 48 hours, and what's the pattern?

What percentage of planned sprint work actually gets completed versus carried over?

Which teams block each other the most, and where are the dependency bottlenecks?

How much unplanned work shows up mid-sprint, and where does it come from?

What's our true cycle time from first commit to production, broken down by stage?

Are our retrospective action items actually changing behavior in the next sprint?

How much time do engineers spend in meetings versus coding each week?

What's the average context-switching frequency per engineer per day?

Which team has the most predictable velocity, and what are they doing differently?

How often do PRs get sent back for rework after initial review?

What's the ratio of feature work to bug fixes to maintenance across teams?

Are code reviews evenly distributed or concentrated on a few people?

What would happen to velocity if we added one engineer to the mobile team?

How long does it take from issue creation to first commit on average?

Which parts of our workflow create the most wait time?

Are standups and sprint ceremonies reflected in actual behavior changes?

How does team velocity trend over the past 6 months — and what caused the dips?

What's the lead time for urgent bug fixes versus normal feature work?

Which cross-team handoffs consistently cause delays?

What's the realistic completion date for Project X? Show me p50 through p90.

Who should review this PR to get the fastest, highest-quality feedback?

Code

Code is the artifact of everything your team does. It tells a story about quality, ownership, risk, and technical health that no status update can capture. Gitrevio analyzes your codebase structure, change patterns, and quality signals to surface problems before they become incidents.

Where is tech debt accumulating fastest, and what's the velocity cost?

Which files change most frequently but have no test coverage?

What's the release risk score for this PR based on historical patterns?

Which services have a bus factor of one — only a single person who understands them?

How is cyclomatic complexity trending across our main repositories?

Which modules are most tightly coupled, creating hidden deployment risks?

What percentage of our hotfix commits touch code that was recently refactored?

Which repos have the most stale, untouched code that's still in production?

How much code churn do we have — files that get rewritten within weeks of being written?

What's the test coverage trend per team and per service over the past quarter?

Which pull requests introduce the most risk based on file hotspot analysis?

Are our refactoring efforts actually reducing complexity, or just moving it around?

What's the ratio of new code to modified code to deleted code per sprint?

Which areas of the codebase have the most inconsistent coding patterns?

How often do merge conflicts occur, and in which files?

What's the average PR size, and are larger PRs correlated with more bugs?

Which services have the longest build times, and is it getting worse?

How much duplicated logic exists across our microservices?

What's the code ownership distribution — are critical paths well-covered?

Which dependencies are outdated and pose security or compatibility risks?

What's the blast radius if we change the auth module?

Which files are most tightly coupled to the payments service?

Business

Engineering is a business function. Every feature has a cost, every team has a capacity, and every headcount decision has downstream consequences. Gitrevio connects engineering activity to business outcomes so you can answer the questions your CFO and board actually care about.

How much does a typical feature actually cost us in engineering hours?

Are contractors delivering at the same rate and quality as full-time engineers?

What's the ROI on our recent engineering hires based on their ramp-up and output?

How much engineering capacity are we actually losing to tech debt maintenance?

If we hire three more engineers, what's the realistic velocity impact after onboarding?

What's the true cost of our current review bottleneck in delayed feature delivery?

How does our engineering efficiency compare quarter over quarter?

What percentage of engineering time goes to features versus keep-the-lights-on work?

Can we deliver the Q3 roadmap with current team capacity, or do we need to hire?

What's the cost per story point across different teams?

How much did context switching cost us in lost productivity last quarter?

What's the business impact of our current attrition rate in the backend team?

Are we allocating engineers to the highest-value projects based on actual output?

What's the break-even timeline for a new senior hire on the platform team?

How much runway do we need to complete the migration project at current velocity?

What would it cost to increase our deploy frequency from weekly to daily?

How does team size correlate with per-engineer productivity in our organization?

What's the engineering cost breakdown per product line?

Are we over-investing in any area relative to its business impact?

How accurately do our quarterly plans predict actual engineering output?

Is this project worth doing given its lognormal timeline distribution?

What's really driving the velocity drop — break down the causes.

AI Impact

Every engineering team is adopting AI tools. Almost none can measure whether they're working. Gitrevio tracks AI-generated code, measures productivity changes, and gives you real data on whether your AI investment is paying off — or just creating a new category of technical debt.

What percentage of our merged code is AI-generated, and is it any good?

Did adopting Copilot actually improve our throughput, or just our commit count?

What's the defect rate of AI-generated code versus human-written code?

Which teams are adopting AI coding tools most effectively?

Are AI-assisted pull requests getting approved faster or slower than human-only ones?

How much review time do AI-generated PRs consume compared to human-written ones?

Is AI-generated code increasing our tech debt or reducing it?

What's the cost per feature when AI tools are used versus when they're not?

Which types of tasks benefit most from AI assistance in our codebase?

Are junior engineers more or less productive with AI tools compared to seniors?

How has our overall code quality trend changed since introducing AI coding assistants?

What's the revert rate on AI-assisted commits versus human-only commits?

Are AI tools helping with test coverage, or are they generating untested code?

How much time are engineers spending prompting and correcting AI versus writing code themselves?

What's the actual ROI on our GitHub Copilot licenses based on measured productivity?

Are AI-generated code patterns consistent with our architecture standards?

Which repositories show the highest AI tool usage, and what's the quality impact?

How has the introduction of MCP-based AI tools changed our engineering workflow patterns?

Are AI tools reducing or increasing the gap between senior and junior engineer output?

What percentage of AI-generated code survives past the first refactoring cycle?

Every question, six ways to ask

Pick the interface that fits your workflow. Every use case above is accessible through every channel below.

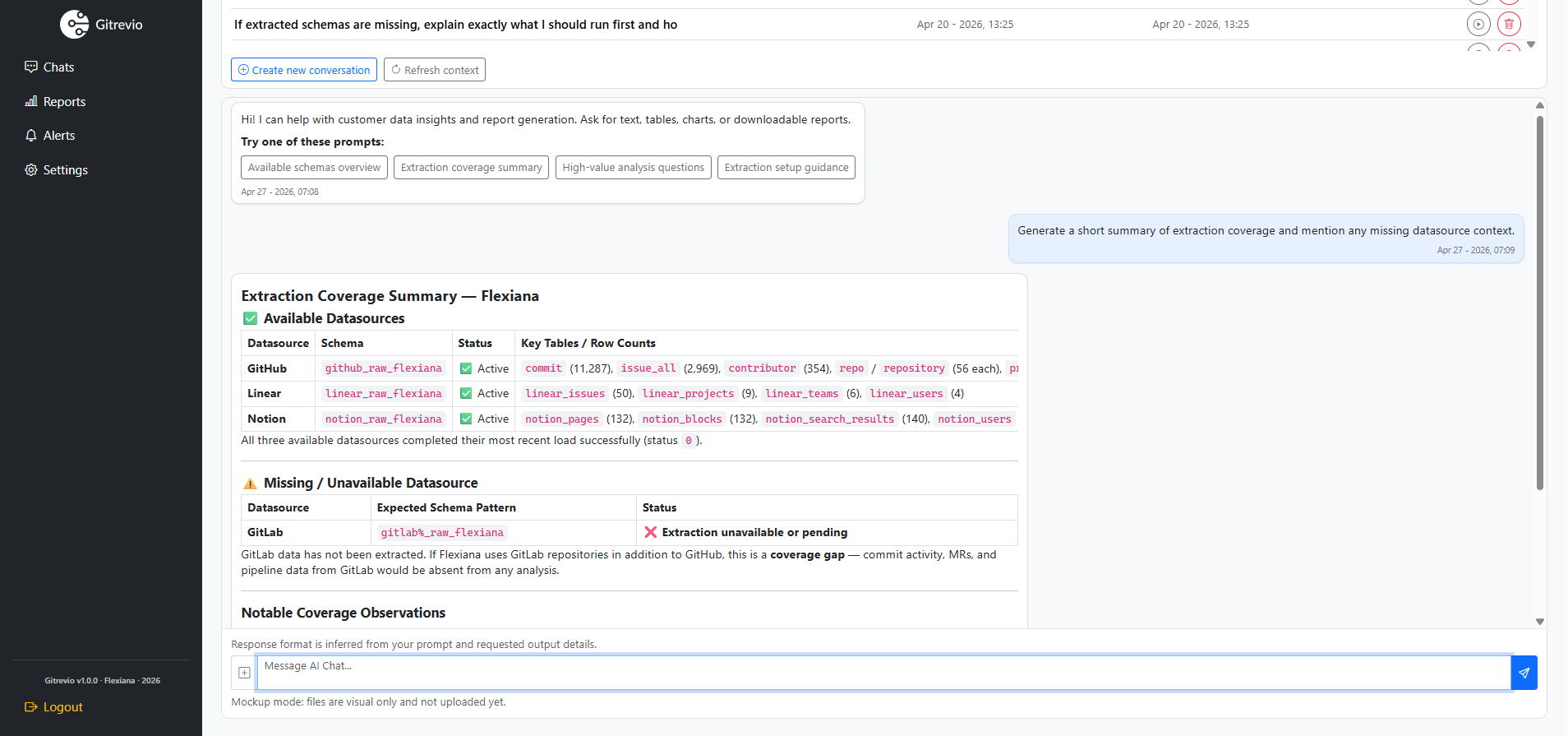

AI Chat

Ask in plain English. Follow up. Get charts, tables, and recommendations.

MCP Server

Use inside Claude, Cursor, or any MCP client. Your AI tools gain engineering context.

Scheduled Reports

Weekly sprint digests, monthly board reports, quarterly trend analysis. Automated.

Real-time Alerts

Define triggers in natural language. Get notified in Slack, Teams, or email.

REST API

Full programmatic access. Build custom integrations and power internal dashboards.

Slack & Teams Bot

Ask questions and share insights directly in your team channels.