Do 30% more with the same team

AI-Powered Engineering Data Platform for Real-Time Insights.

GitRevio is an engineering data platform that gives you a clear view of your engineering work. Track progress, spot risks early, and stay on top of performance — without manual work.

Free for up to 19 contributors. $40/mo per IC after that. Every feature included.

Are You Tired of Chasing Engineering Updates?

Most engineering analytics tools give you four DORA metrics and a dashboard.

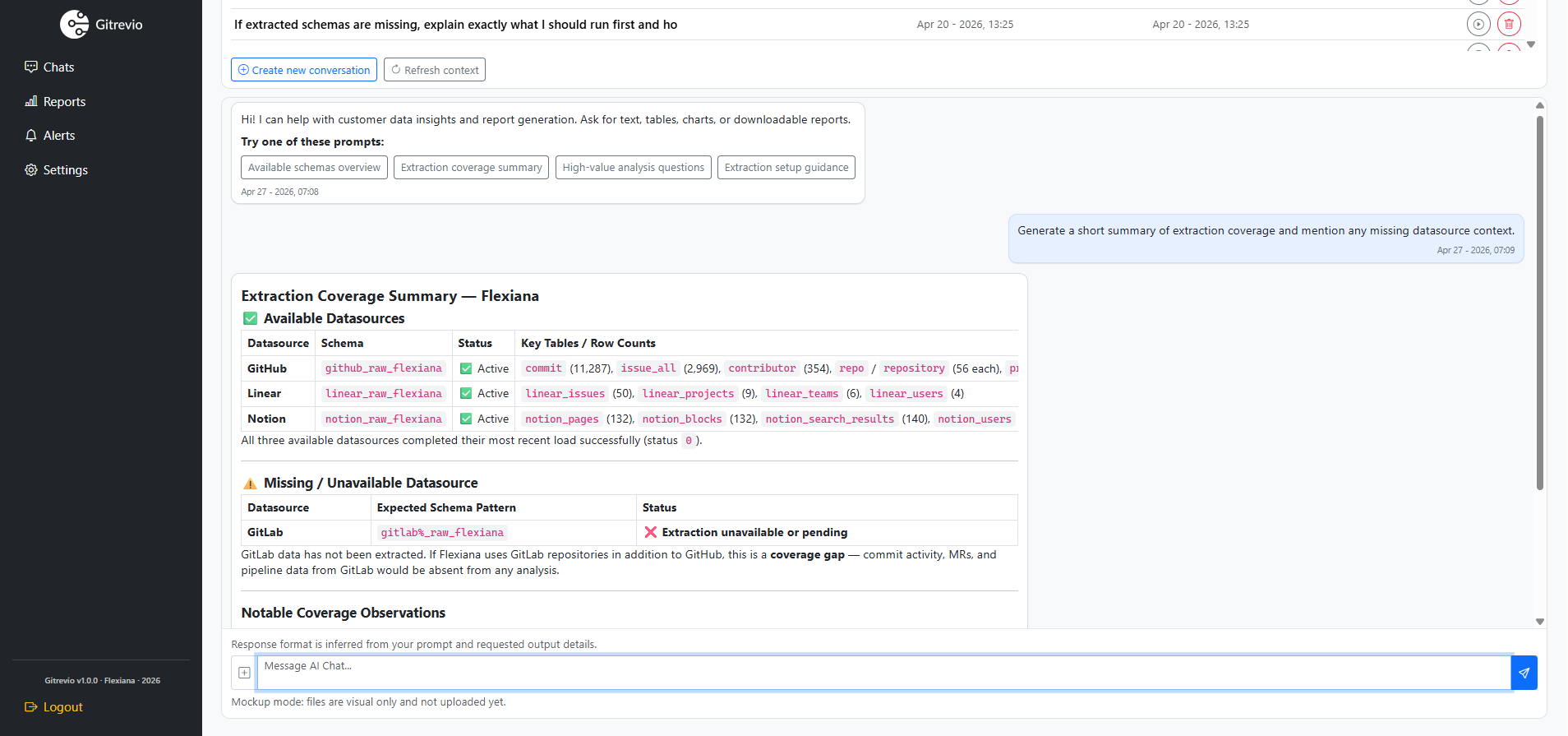

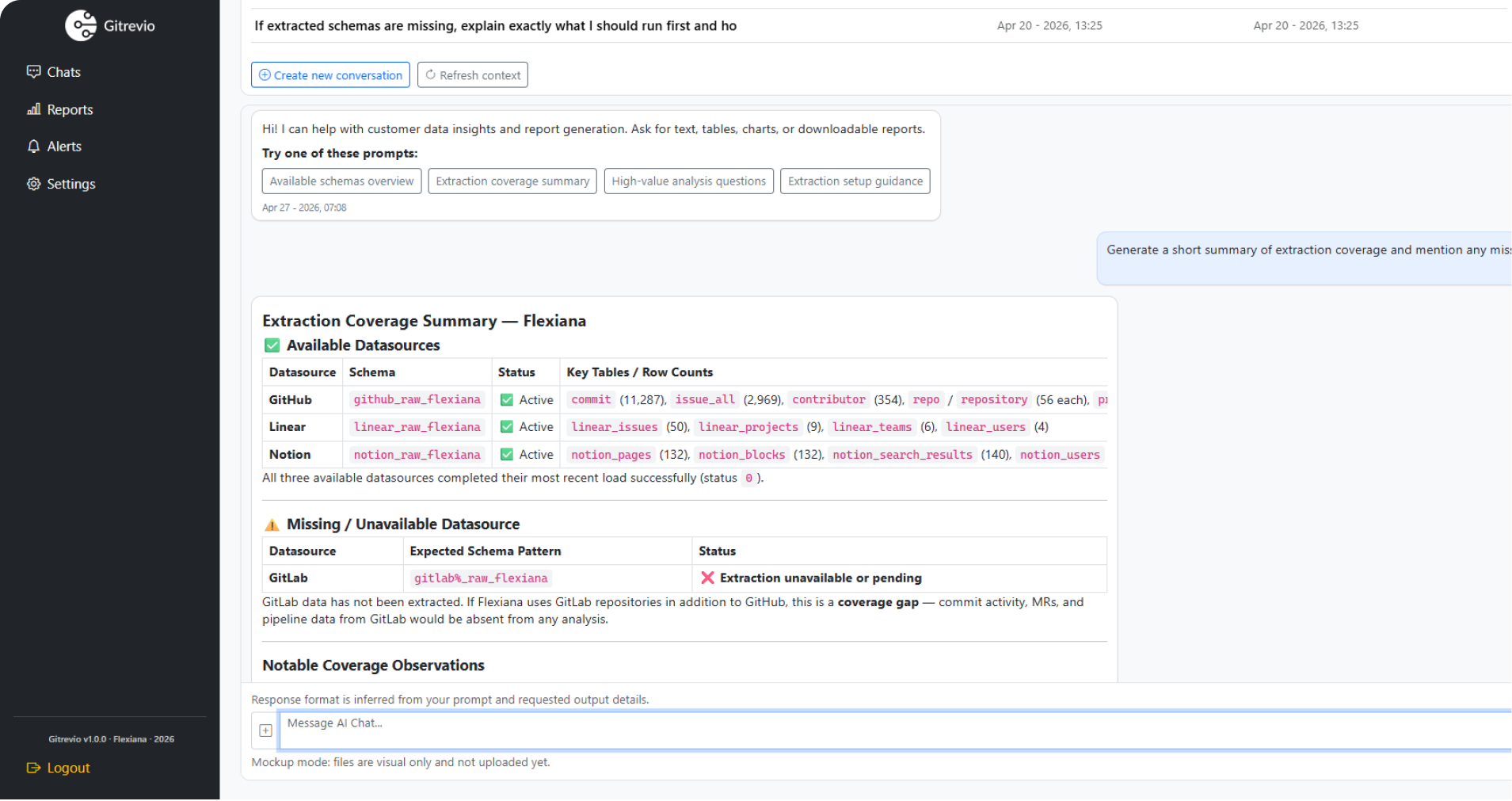

Gitrevio gives you answers to 100+ questions about your people, processes, code, and business — through AI chat, reports, alerts, or directly inside your AI tools via MCP.

No more tool-switching.

Just one clear view.

Stop switching between tools just to keep track of your engineering work. With GitRevio, everything is displayed in one dashboard: progress, performance, and any blockers. No extra steps.

Why do engineering teams choose GitRevio?

Engineering data is spread across multiple tools, so it is hard to track progress. Reports are slow and often outdated, which wastes time.

GitRevio brings everything together and turns that data into simple insights, so you stay fully up to date without digging or waiting.

All your engineering insights, in one place.

Unified View

No more switching between tools and dashboards. GitRevio's engineering reporting platform gives you one view of everything.

Simple Insights

Monitor progress and spot risks quickly, without deep dives into reports.

Quick Answers

Just ask, and get the information you need on the go. No waiting.

Early Warnings

GitRevio identifies issues before they worsen.

Connect Your Data

Integrate GitHub, GitLab, Jira, Slack, and more into one engineering data platform.

AI Analyzes Everything

The system continuously scans all your data, using AI-powered engineering analytics to surface insights and risks.

Get Results Fast

Whenever you need answers or reports, they're just a click away.

Most tools skip the hard part

They connect to GitHub, count your PRs, and show you a chart.

That's step one. Gitrevio does three things.

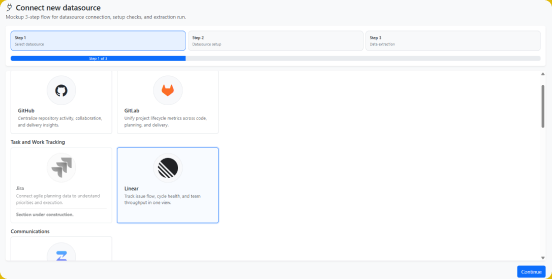

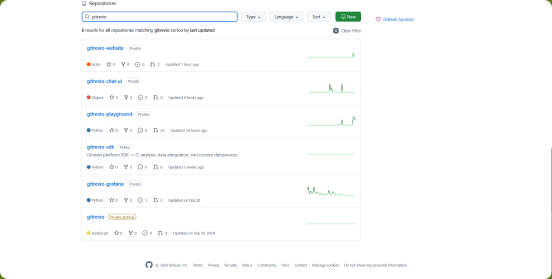

1. Integrate

Connect everything

GitHub (cloud + GHES), GitLab (cloud + self-hosted), Azure DevOps, Bitbucket, and LocalGit for on-premise code analysis are live today, alongside Jira, Linear, Slack, and HRIS connectors (BambooHR, Workday, Personio). Microsoft Teams, Zulip, GitHub Copilot Metrics, and the Cursor Admin API are queued for Q3 2026. PagerDuty, Opsgenie, Datadog, Sentry, and full CI/CD coverage land in Q4 2026.

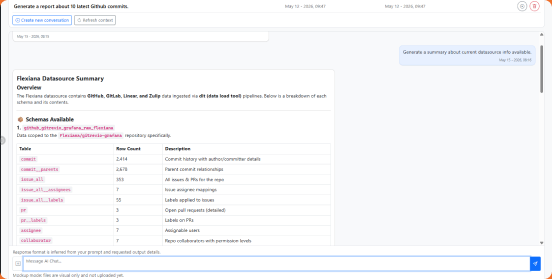

2. Understand

AI makes sense of it

This is what competitors skip. Six AI workers and a library of ML models classify activities, track onboarding curves, score attrition risk, compare plans to reality, detect anomalies, map knowledge silos, and calculate release risk — automatically, continuously. When a metric changes, Shapley-based attribution (with axiom-verified efficiency, symmetry, dummy-player, and linearity tests) decomposes the shift into causal contributions. Lognormal estimation calibrated per team gives p50/p75/p90 timelines instead of gut-feel deadlines. Code blast-radius is computed via formal reachability analysis on your local dependency graph.
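At its core, a code blast radius is a reachability question: if a module changes, every module that transitively imports it may be affected. The sketch below is a minimal illustration, assuming the dependency graph has already been extracted into a dict that maps each module to the modules it imports. The module names and the `blast_radius` helper are hypothetical, and a plain breadth-first search over reversed edges is a simpler technique than whatever formal analysis GitRevio runs.

```python
from collections import deque


def blast_radius(deps: dict[str, list[str]], changed: str) -> set[str]:
    """Return every module that transitively depends on `changed`.

    `deps` maps a module to the modules it imports (a hypothetical,
    pre-extracted dependency graph).
    """
    # Reverse the edges: for each module, which modules import it?
    importers: dict[str, set[str]] = {}
    for module, targets in deps.items():
        for target in targets:
            importers.setdefault(target, set()).add(module)

    # Breadth-first search over the reversed graph.
    affected: set[str] = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for dependent in importers.get(node, ()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)

    # In a cyclic graph the changed module can reach itself; exclude it.
    affected.discard(changed)
    return affected


# Hypothetical service graph for illustration.
deps = {
    "api": ["auth", "payments"],
    "payments": ["auth", "db"],
    "auth": ["db"],
    "worker": ["payments"],
    "cli": [],
}
print(sorted(blast_radius(deps, "db")))  # ['api', 'auth', 'payments', 'worker']
```

Reversing the edges once up front keeps each query linear in the size of the graph, which matters when the same graph is queried for many changed files.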

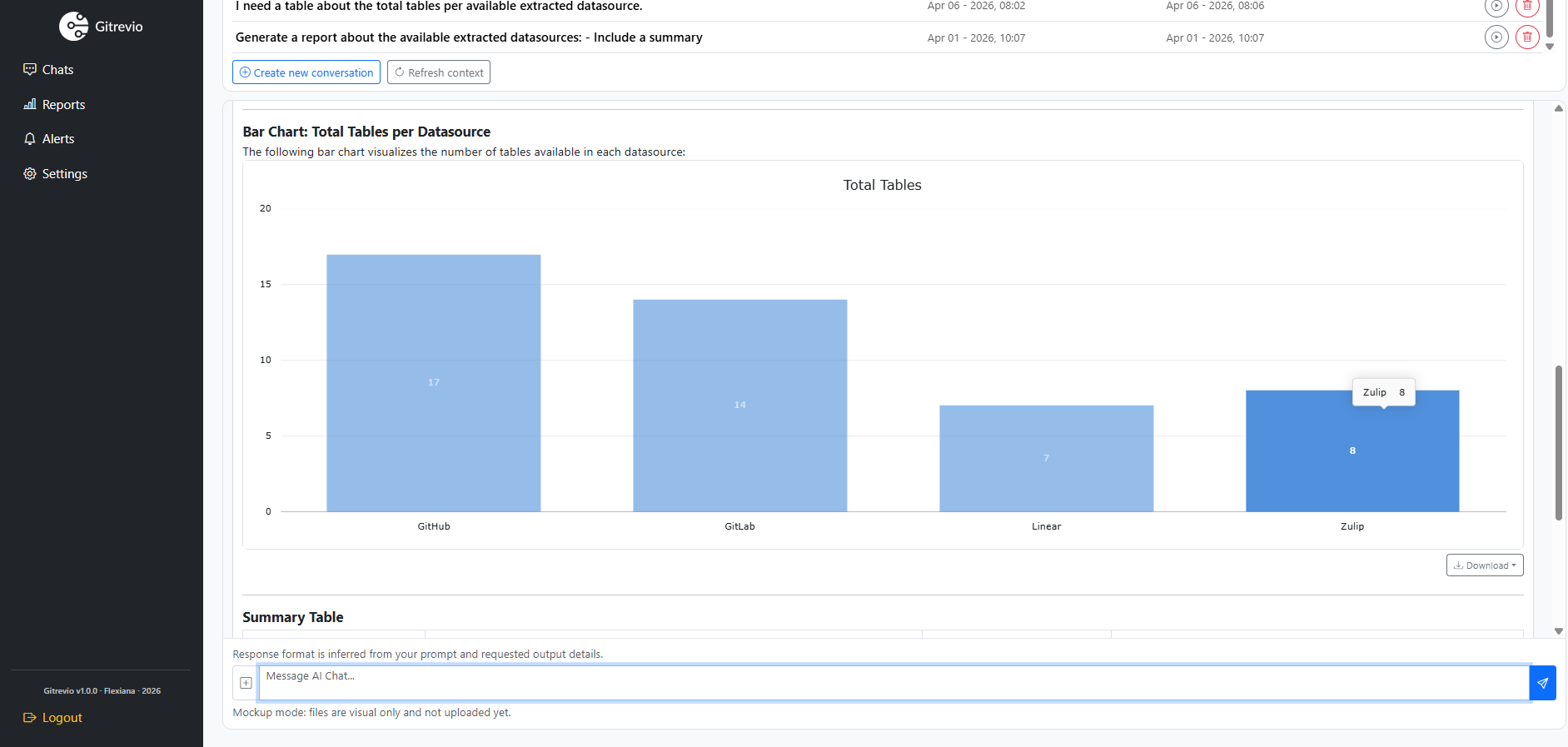

3. Act

Available everywhere

Ask questions in AI chat. Schedule one of 8 report templates. Configure 9 alert rule types. Use our 72-tool MCP server inside Claude Desktop, Claude Code, Cursor, Cline, Continue.dev, Goose, or Aider. Call the REST API. Install one of 30 built-in skills, or ship your own: every skill runs across all five surfaces (API, MCP, chat, reports, alerts).

Clarity for every level of your engineering team.


For Executives

Use a modern engineering insights platform to monitor productivity, investment ROI, and risks.


For Engineering Managers

Track sprint delivery, blockers, team health, and planning accuracy.


For Team Leads

Improve velocity, code reviews, and onboarding outcomes.

Other tools tell you your cycle time is 4.2 days.

Useful, but you already knew things were slow. Here's what Gitrevio tells you that nobody else can.

How long does it take a new hire to ship their first meaningful feature?

How far off are our sprint estimates from actual delivery?

Where is tech debt accumulating fastest, and what's the velocity cost?

How much does a typical feature actually cost us in engineering hours?

What percentage of our merged code is AI-generated, and is it any good?

Who's at risk of leaving, and what's the organizational blast radius if they do?

What percentage of planned sprint work actually gets completed?

Which files change most frequently but have no test coverage?

What's the ROI on our recent engineering hires?
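One of these questions, which files change most often but have no test coverage, can be approximated by combining commit churn with a coverage set. The sketch assumes its input is the text printed by `git log --name-only --pretty=format:` (one touched file path per line, with blank lines between commits) and that the set of covered files comes from some coverage report. The function name, threshold, and file paths are hypothetical; this is an illustration, not how GitRevio computes it.

```python
from collections import Counter


def untested_hotspots(git_log_output: str, covered: set[str], min_changes: int = 2):
    """Rank files by change count, keeping only those absent from `covered`."""
    # Each non-blank line is a file path touched by one commit, so counting
    # lines counts the commits that touched each file.
    churn = Counter(line.strip() for line in git_log_output.splitlines() if line.strip())
    return [
        (path, n)
        for path, n in churn.most_common()
        if n >= min_changes and path not in covered
    ]


# Hypothetical log excerpt (three commits) and coverage set.
log = """
src/payments/charge.py
src/auth/session.py

src/payments/charge.py
README.md

src/payments/charge.py
src/auth/session.py
"""
covered = {"src/auth/session.py"}
print(untested_hotspots(log, covered))  # [('src/payments/charge.py', 3)]
```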

What happens if Sarah leaves?

No tool in the market answers this question.

Gitrevio does. Our What-If Simulator models the impact of team changes before they happen — attrition, hiring, team restructuring, reassignment.

It knows Sarah handles 38% of backend code reviews, owns three critical services, and mentors two junior engineers.

Losing her doesn't just remove one person — it creates a 3-week review bottleneck, orphans 12,000 lines of undocumented code, and derails two onboarding tracks.

Now you can plan for it. Or better — use the attrition risk score to act before it happens.
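A figure like "38% of backend code reviews" is a review-share calculation. A related "bus factor" can be approximated by one simple heuristic: the smallest number of people who together perform more than half of all reviews. The sketch below is a hedged illustration of that heuristic only. The 50% threshold and every reviewer name except Sarah are hypothetical, and it is not GitRevio's method.

```python
from collections import Counter


def review_shares(reviewers: list[str]) -> dict[str, float]:
    """Fraction of all reviews performed by each reviewer."""
    counts = Counter(reviewers)
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}


def review_bus_factor(reviewers: list[str], threshold: float = 0.5) -> int:
    """Smallest number of top reviewers whose combined share exceeds `threshold`."""
    cumulative = 0.0
    shares = sorted(review_shares(reviewers).values(), reverse=True)
    for k, share in enumerate(shares, start=1):
        cumulative += share
        if cumulative > threshold:
            return k
    return len(set(reviewers))


# Hypothetical review log: one entry per completed review.
reviews = ["sarah"] * 38 + ["marcus"] * 25 + ["priya"] * 20 + ["lee"] * 17
print(review_shares(reviews)["sarah"])  # 0.38
print(review_bus_factor(reviews))       # 2
```

A bus factor of 2 means that losing the top two reviewers would remove most of the team's review capacity.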

> What happens if Sarah Chen leaves the backend team?

# Impact Analysis

Code review capacity: -38%

Orphaned services: 3 (auth, payments, notifications)

Knowledge silo risk: HIGH

Affected onboarding: 2 engineers

Estimated velocity impact: -22% for 6 weeks

# Recommendations

1. Cross-train Marcus on auth service (2 weeks)

2. Document payments flow (est. 8 hours)

3. Redistribute reviews to reduce bus factor

# Contributing factors (Shapley)

Engagement decline: -34%

Manager change (Feb): -28%

Comp gap vs market: -22%

Remaining factors: -16%

# Current attrition risk: Sarah Chen

Score: MEDIUM (declining engagement pattern)
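The contributing factors above are reported as shares that add up to 100%. That is the efficiency property of Shapley values: the individual contributions sum exactly to the total change. The sketch below computes exact Shapley values by enumerating coalitions. It assumes a hypothetical characteristic function (a toy attrition-risk model with one interaction term and one do-nothing "dummy" factor). The factors and numbers are illustrative and are not the model behind the figures above. Exact enumeration is exponential in the number of factors, so it only suits a handful of them; larger sets usually need sampling-based approximations.

```python
from itertools import combinations
from math import factorial, isclose


def shapley_values(players, value):
    """Exact Shapley values for a characteristic function value(frozenset) -> float."""
    n = len(players)
    phi = {}
    for p in players:
        others = [q for q in players if q != p]
        total = 0.0
        for k in range(n):  # size of the coalition formed before p joins
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                s = frozenset(coalition)
                total += weight * (value(s | {p}) - value(s))
        phi[p] = total
    return phi


# Hypothetical toy model: attrition-risk increase (in risk points) caused by
# each active factor, plus one interaction term. Not GitRevio's model.
EFFECTS = {"engagement_decline": 0.30, "manager_change": 0.20, "comp_gap": 0.15, "weekday": 0.0}


def risk_increase(active: frozenset) -> float:
    # Sum in sorted order so floating-point results are deterministic.
    base = sum(EFFECTS[f] for f in sorted(active))
    if {"engagement_decline", "manager_change"} <= active:
        base += 0.10  # the two factors compound when they occur together
    return base


players = list(EFFECTS)
phi = shapley_values(players, risk_increase)
total_change = risk_increase(frozenset(players)) - risk_increase(frozenset())

assert isclose(sum(phi.values()), total_change)  # efficiency axiom
assert phi["weekday"] == 0.0                     # dummy-player axiom
for factor, contribution in phi.items():
    print(f"{factor}: {contribution / total_change:.0%} of the change")
```

Shapley values split the interaction term evenly between the two factors that create it, so neither one absorbs all of the credit.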

# claude_desktop_config.json

```json
{
  "mcpServers": {
    "gitrevio": {
      "command": "npx",
      "args": ["gitrevio-mcp"],
      "env": {
        "GITREVIO_API_KEY": "gr_..."
      }
    }
  }
}
```

# Then in Claude:

MCP-native. Not an afterthought.

Some competitors recently bolted an MCP server onto their dashboard product. Ours was designed for it from the start.

Every piece of engineering intelligence Gitrevio produces is available as an MCP tool — with role-based access control, 50+ pre-built prompts, and structured outputs your AI agents can reason about.

Use it in Claude Desktop, Claude Code, Cursor, Windsurf, or any MCP client. Your AI coding assistant suddenly understands your team — who reviews what, where the bottlenecks are, what the real sprint capacity is.

This isn't a chat widget on a dashboard. It's engineering intelligence as infrastructure.
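In the Model Context Protocol, a client invokes a server tool with a JSON-RPC 2.0 request whose method is `tools/call` and whose `params` carry the tool's `name` and `arguments`. The sketch below builds such a message after a role check. The role table, tool names, and helper function are all hypothetical, because GitRevio's actual tool catalog and RBAC rules are not described here. In a real deployment the role check belongs on the server, since a client cannot be trusted to enforce it. It is kept in one function here only for brevity.

```python
import json

# Hypothetical role -> allowed-tool mapping; GitRevio's real tool names and
# RBAC rules are not documented on this page.
ROLE_TOOLS = {
    "executive": {"org_health_score"},
    "engineering_manager": {"org_health_score", "what_if_attrition", "sprint_forecast"},
}


def tools_call_request(request_id: int, role: str, tool: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 `tools/call` message after a (hypothetical) role check."""
    if tool not in ROLE_TOOLS.get(role, set()):
        raise PermissionError(f"role {role!r} is not allowed to call {tool!r}")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


print(tools_call_request(1, "engineering_manager", "what_if_attrition",
                         {"person": "Sarah Chen", "team": "backend"}))
```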

One number. Twenty signals.

The Org Health Score combines delivery velocity, code quality, review efficiency, onboarding speed, sprint predictability, knowledge distribution, attrition risk, tech debt trajectory, and a dozen other signals into a single, actionable number.

It's not a vanity metric.

Each component is drillable. Score drops? You can see exactly which signal moved, in which team, and what caused it. Every week you get a digest explaining what changed and what to do about it.

Your board wants one number?

Give them a real one, backed by data from every corner of your engineering operation.

# Org Health Score (April 2026)

Delivery velocity 82 ↑

Code quality 71 →

Review efficiency 58 ↓ bottleneck in frontend

Sprint predictability 79 ↑

Onboarding speed 85 ↑

Knowledge distribution 62 → 3 silos detected

Attrition risk 88 ↑ no immediate concerns

Tech debt trajectory 65 ↓ payments service
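The page does not say how the components are combined into one score. A weighted mean is one common way to build such a composite. The sketch below uses only the eight components shown in the example above (the real score reportedly draws on about twenty signals) and equal weights. Both choices are assumptions. The `declining` helper illustrates the first step of a drill-down: which components are trending down?

```python
# Component scores and trend arrows copied from the April 2026 example above.
COMPONENTS = {
    "Delivery velocity": (82, "↑"),
    "Code quality": (71, "→"),
    "Review efficiency": (58, "↓"),
    "Sprint predictability": (79, "↑"),
    "Onboarding speed": (85, "↑"),
    "Knowledge distribution": (62, "→"),
    "Attrition risk": (88, "↑"),
    "Tech debt trajectory": (65, "↓"),
}


def health_score(components, weights=None):
    """Weighted mean of 0-100 component scores; equal weights are an assumption."""
    if weights is None:
        weights = {name: 1.0 for name in components}
    total_weight = sum(weights[name] for name in components)
    weighted = sum(score * weights[name] for name, (score, _trend) in components.items())
    return weighted / total_weight


def declining(components):
    """Drill-down entry point: which components are trending down?"""
    return [name for name, (_score, trend) in components.items() if trend == "↓"]


print(health_score(COMPONENTS))  # 73.75
print(declining(COMPONENTS))     # ['Review efficiency', 'Tech debt trajectory']
```

Passing a custom `weights` dict (for example, weighting attrition risk more heavily) changes the composite without changing the components, so each component stays drillable.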

# Payments v2 migration — estimate

# Lognormal estimate (calibrated to team history)

p50 (likely): 6.2 weeks

p75 (buffer): 8.4 weeks

p90 (safe): 11.1 weeks

# Risk factors widening the distribution

Cross-team dependency (platform): +2.3w at p90

Scope creep rate (this team): +1.8w at p90

Key reviewer availability: +0.9w at p90

# Worth doing?

Expected value at p50: $340K ARR impact

Break-even probability: 72%

Recommendation: GO — but staff for p75, not p50

Your estimates are wrong. Here's how wrong.

Software project durations tend to follow lognormal distributions: they're rarely early and often very late.

Your team estimates "6 weeks" and means it as a point estimate. Reality is a probability curve: 6 weeks if everything goes right, 11 weeks if it doesn't. Most planning tools ignore this. Gitrevio models it.

For every project, epic, and sprint, Gitrevio fits a lognormal distribution calibrated to your team's actual delivery history.

You get p50, p75, and p90 completion dates — not a single guess that's wrong 80% of the time. The model learns from every completed project: how much scope creep your team absorbs, how often dependencies slip, how reviews stretch in practice.
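Here is a minimal sketch of the calibration idea. It assumes the ratio of actual to estimated duration is lognormal. It takes logs of past overruns, estimates their mean and standard deviation, and scales a new point estimate by the matching standard-normal quantiles. The delivery history and function names are hypothetical. The text above says the real model also learns from scope creep, dependency slips, and review delays, whereas this sketch uses only the overall overrun ratio.

```python
import math
from statistics import NormalDist, fmean, stdev


def fit_overrun(estimates: list[float], actuals: list[float]) -> tuple[float, float]:
    """Fit a lognormal to actual/estimate ratios; returns (mu, sigma) of ln(ratio)."""
    logs = [math.log(a / e) for e, a in zip(estimates, actuals)]
    return fmean(logs), stdev(logs)  # stdev needs at least two past projects


def forecast(estimate: float, mu: float, sigma: float,
             probs=(0.5, 0.75, 0.9)) -> dict[float, float]:
    """Percentile completion times for a new point estimate."""
    z = NormalDist()  # standard normal
    return {p: estimate * math.exp(mu + sigma * z.inv_cdf(p)) for p in probs}


# Hypothetical delivery history, in weeks.
estimates = [4, 6, 3, 8, 5]
actuals = [5, 9, 3.5, 12, 6]
mu, sigma = fit_overrun(estimates, actuals)
for p, weeks in forecast(6, mu, sigma).items():
    print(f"p{round(p * 100)}: {weeks:.1f} weeks")
```

Working in log space turns the skewed duration distribution into a normal one, so the mean and standard deviation of the logs are enough to produce any percentile.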

This changes the conversation from "when will it ship?" to "is this project worth doing given realistic risk?"

A feature with a 3-week p50 but a 9-week p90 needs a different staffing plan than one with a tight distribution around 4 weeks.
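Because a lognormal is fully determined by its median and its log-scale spread σ, any two quoted percentiles pin down the rest. The sketch below recovers σ from p50 and p90. It then checks that the Payments v2 figures above (6.2, 8.4, and 11.1 weeks) are consistent with a single lognormal, and it quantifies how much wider the 3-week/9-week feature is. The function names are illustrative and the sketch is not GitRevio's code.

```python
import math
from statistics import NormalDist

Z = NormalDist()  # standard normal


def sigma_from_quantiles(p50: float, p90: float) -> float:
    """Lognormal log-scale spread implied by a median and a 90th percentile."""
    return math.log(p90 / p50) / Z.inv_cdf(0.9)


def quantile(p50: float, sigma: float, p: float) -> float:
    """Any percentile of the lognormal with median `p50` and spread `sigma`."""
    return p50 * math.exp(sigma * Z.inv_cdf(p))


def prob_done_by(p50: float, sigma: float, weeks: float) -> float:
    """Probability of finishing within `weeks`."""
    return Z.cdf(math.log(weeks / p50) / sigma)


# Payments v2 example: p50 = 6.2 weeks, p90 = 11.1 weeks.
s = sigma_from_quantiles(6.2, 11.1)
print(f"sigma={s:.2f}, p75={quantile(6.2, s, 0.75):.1f} weeks")  # sigma=0.45, p75=8.4 weeks
print(f"P(done within 8 weeks) = {prob_done_by(6.2, s, 8):.0%}")

# The wide feature: p50 = 3 weeks, p90 = 9 weeks.
wide = sigma_from_quantiles(3, 9)
print(f"sigma={wide:.2f}, P(slips past 6 weeks) = {1 - prob_done_by(3, wide, 6):.0%}")
```

The wide feature's σ is nearly twice that of Payments v2. This is why it calls for a different staffing plan even though its median is shorter.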

Integrate with your stack

The problem with DORA-only tools

DORA metrics tell you how fast and how reliably code moves from commit to production.

Four numbers. That's the engineering equivalent of judging a company by its stock price — technically a metric, but it tells you almost nothing about what's actually going on.

These are the questions that keep engineering leaders up at night.

Why do new hires take four months to become productive?

What happens to three teams' velocity when your senior architect leaves? Are your sprint plans connected to reality, or are you planning fiction every two weeks? Is anyone burning out? Is the AI code your team is shipping as reliable as the code they write themselves?

DORA can't answer any of them.

Gitrevio can answer all of them, and a hundred more.

Because we don't just count pull requests. We understand your engineering operation.

Got Questions? We Have Answers.

An engineering data platform brings all of your engineering data together, so you don't have to jump between tools to see every aspect of your work.

GitRevio runs AI-powered engineering analytics in the background. It detects patterns, summarizes trends, and provides recommendations automatically, with no manual work required.

Absolutely. GitRevio works with whatever you already use — no process changes needed.

Yes. GitRevio automates engineering reporting with live dashboards, recurring reports, and executive summaries.